¶ Interpretable Anomaly Detection with DIFFI

Depth-based Isolation Forest Feature Importance (DIFFI) is a method for making the popular Isolation Forest anomaly detection algorithm more interpretable. It was introduced in the paper "Interpretable Anomaly Detection with DIFFI: Depth-based Feature Importance of Isolation Forest" by Carletti, Terzi, and Susto (2023), published in Engineering Applications of Artificial Intelligence.

Code and datasets from the paper are publicly available in the official GitHub repository.

¶ Model description

The Isolation Forest (IF) algorithm is one of the most widely used approaches for unsupervised anomaly detection due to its efficiency and effectiveness. However, its lack of interpretability limits adoption in real-world, decision-critical contexts.
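As a point of reference, the snippet below fits a plain Isolation Forest with scikit-learn on synthetic data. It is only a minimal sketch: the data, the shift used to create anomalies, and the variable names are assumptions made for illustration. Note that the model returns labels and scores but no indication of which features drove them, which is the gap DIFFI targets.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

# Synthetic data (an assumption for illustration): 300 points, 4 features.
rng = np.random.default_rng(0)
X = rng.normal(size=(300, 4))
X[:5] += 6.0  # shift the first five points so they act as anomalies

forest = IsolationForest(n_estimators=100, random_state=0).fit(X)

labels = forest.predict(X)            # +1 for inliers, -1 for predicted outliers
scores = forest.decision_function(X)  # lower values mean more anomalous

# The output says *which* points look anomalous, not *why*.
print(labels[:5], scores[:5].round(3))
```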

DIFFI addresses this challenge by introducing feature importance measures for Isolation Forest at both global and local levels:

- Global importance: Identifies which features contribute most to anomaly detection across the dataset.

- Local importance: Explains why a specific instance is considered anomalous by attributing contributions to its features.
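To make the local case concrete, the sketch below attributes a single instance's isolation to individual features using only the depth of its path in each tree. It is a simplified illustration in the spirit of DIFFI, not the paper's reference implementation: the function name `local_importance_sketch`, the synthetic data, and the plain `1 / depth` weight are assumptions made here.

```python
import numpy as np
from sklearn.ensemble import IsolationForest


def local_importance_sketch(forest, x):
    """Depth-weighted feature attribution for one instance (simplified)."""
    x = np.asarray(x, dtype=float).reshape(1, -1)
    score_sum = np.zeros(x.shape[1])
    counts = np.zeros(x.shape[1])
    for est, feat_idx in zip(forest.estimators_, forest.estimators_features_):
        tree = est.tree_
        # Node ids visited by x in this tree, root first, leaf last.
        nodes = est.decision_path(x[:, feat_idx]).indices
        split_nodes = nodes[tree.children_left[nodes] != -1]  # drop the leaf
        depth = len(nodes)
        # Map tree-local feature indices back to columns of the input.
        feats = feat_idx[tree.feature[split_nodes]]
        # Short paths (fast isolation) give more credit to the features on them.
        np.add.at(score_sum, feats, 1.0 / depth)
        np.add.at(counts, feats, 1.0)
    # Average credit per occurrence, so frequently sampled features are not favored.
    return score_sum / np.maximum(counts, 1.0)


# Synthetic data (an assumption): five anomalies that deviate only in feature 2.
rng = np.random.default_rng(0)
X = rng.normal(size=(500, 5))
X[:5, 2] = rng.uniform(6.0, 10.0, size=5)

forest = IsolationForest(n_estimators=300, random_state=0).fit(X)
lfi = local_importance_sketch(forest, X[0])
print(np.argsort(lfi)[::-1])  # features ranked from most to least important
```

Averaging per occurrence rather than summing is a design choice made here so that a feature is not ranked highly merely because it was drawn more often by the random splits.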

Key traits of DIFFI:

- Depth-based method: Uses the depth of splits in isolation trees to quantify importance.

- Low computational cost: Produces explanations with significantly lower computational overhead than SHAP and other state-of-the-art interpretability methods.

- Feature selection: Provides a framework for unsupervised feature selection, leading to more parsimonious and potentially more accurate anomaly detection models.

¶ DIFFI

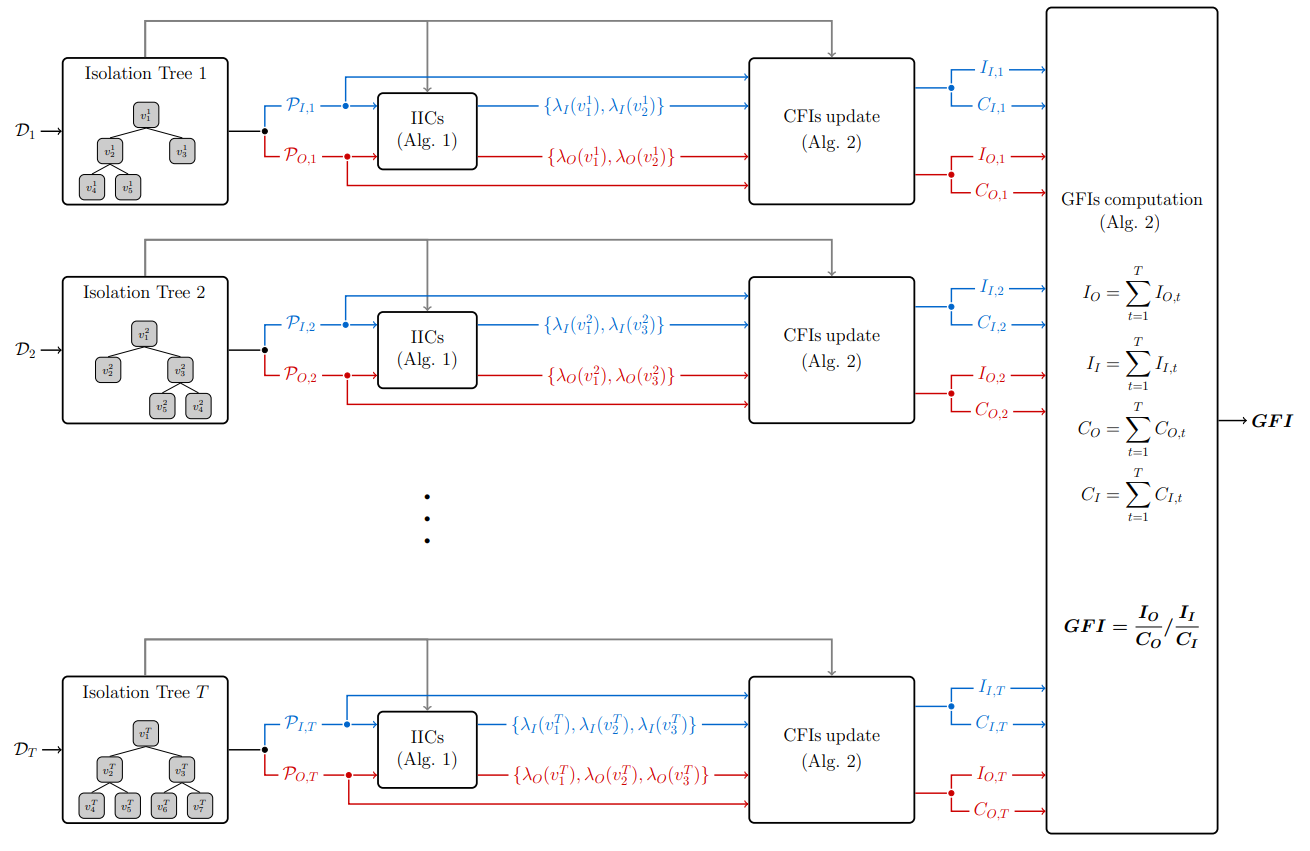

Figure 1 (from the paper) illustrates the workflow of DIFFI:

- Each isolation tree labels data points as predicted inliers or predicted outliers.

- Induced Imbalance Coefficients (IICs) quantify the usefulness of feature splits for anomalies vs. inliers.

- Cumulative Feature Importances (CFIs) are updated along the paths of data points, with each update weighted by both split depth (early splits matter more) and imbalance.

- Global Feature Importances (GFIs) are obtained by normalizing CFIs across all features, highlighting which variables are most relevant for isolating anomalies.
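The workflow above can be sketched on top of scikit-learn's `IsolationForest`. This is a hedged approximation rather than the authors' code: the function name `global_importance_sketch` is hypothetical, the IIC is taken as the raw fraction of samples in the larger child, inliers and outliers are labeled by the whole forest instead of tree by tree, and the final rescaling to sum to 1 is added only for readability.

```python
import numpy as np
from sklearn.ensemble import IsolationForest


def global_importance_sketch(forest, X):
    """Simplified DIFFI-style global feature importances."""
    n_features = X.shape[1]
    labels = forest.predict(X)  # forest-level labels (a simplification)
    groups = {"outliers": X[labels == -1], "inliers": X[labels == 1]}
    cfi = {name: np.zeros(n_features) for name in groups}
    counts = {name: np.zeros(n_features) for name in groups}

    for est, feat_idx in zip(forest.estimators_, forest.estimators_features_):
        tree = est.tree_
        left, right = tree.children_left, tree.children_right
        internal = left != -1
        n = tree.n_node_samples.astype(float)
        # IIC per split node: share of samples sent to the larger child.
        iic = np.zeros(tree.node_count)
        iic[internal] = np.maximum(n[left[internal]], n[right[internal]]) / n[internal]

        for name, X_group in groups.items():
            if X_group.shape[0] == 0:
                continue
            paths = est.decision_path(X_group[:, feat_idx])  # sparse indicator
            depth = np.asarray(paths.sum(axis=1)).ravel()    # nodes per path
            rows, nodes = paths.nonzero()
            keep = internal[nodes]                           # skip leaf nodes
            rows, nodes = rows[keep], nodes[keep]
            feats = feat_idx[tree.feature[nodes]]
            # CFI update: imbalance weighted by inverse path depth.
            np.add.at(cfi[name], feats, iic[nodes] / depth[rows])
            np.add.at(counts[name], feats, 1.0)

    fi_out = cfi["outliers"] / np.maximum(counts["outliers"], 1.0)
    fi_in = cfi["inliers"] / np.maximum(counts["inliers"], 1.0)
    gfi = fi_out / np.maximum(fi_in, 1e-12)
    total = gfi.sum()
    return gfi / total if total > 0 else gfi


# Synthetic data (an assumption): anomalies deviate only in feature 0.
rng = np.random.default_rng(0)
X = rng.normal(size=(1000, 5))
X[:20, 0] = rng.choice([-1.0, 1.0], size=20) * rng.uniform(6.0, 10.0, size=20)

forest = IsolationForest(n_estimators=200, contamination=0.02, random_state=0).fit(X)
gfi = global_importance_sketch(forest, X)
print(gfi.round(3))
```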

¶ Intended uses & limitations

DIFFI is intended for:

- Enhancing interpretability of anomaly detection results produced by Isolation Forest.

- Supporting root cause analysis in multivariate systems.

- Performing unsupervised feature selection for high-dimensional anomaly detection tasks.

- Real-world applications requiring efficient, real-time anomaly detection.
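For the feature selection use case, one simple pattern is to rank features by their global importance and refit the detector on the top-ranked subset. The sketch below assumes an importance vector is already available; the helper `select_top_k_features`, the placeholder scores, and the choice of `k` are all hypothetical and are not taken from the paper.

```python
import numpy as np
from sklearn.ensemble import IsolationForest


def select_top_k_features(importances, k):
    """Return the column indices of the k highest-scoring features, in column order."""
    ranked = np.argsort(importances)[::-1]
    return np.sort(ranked[:k])


rng = np.random.default_rng(0)
X = rng.normal(size=(400, 6))

# Placeholder scores for illustration only, not real DIFFI output.
importances = np.array([0.40, 0.05, 0.30, 0.05, 0.15, 0.05])

selected = select_top_k_features(importances, k=3)
reduced_forest = IsolationForest(random_state=0).fit(X[:, selected])
print(selected)  # [0 2 4]
```

In practice, `k` would be chosen by comparing detection quality across several subset sizes rather than fixed in advance.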

Limitations:

- DIFFI is designed specifically for Isolation Forest; while extensions to other tree-based anomaly detection methods (e.g., Extended IF, SCiForest, Streaming HSTrees) are promising, they remain future work.

- As an interpretability tool, DIFFI complements but does not replace anomaly detection itself.

¶ BibTeX entry and citation info

@article{carletti2023interpretable,
  title={Interpretable anomaly detection with diffi: Depth-based feature importance of isolation forest},
  author={Carletti, Mattia and Terzi, Matteo and Susto, Gian Antonio},
  journal={Engineering Applications of Artificial Intelligence},
  volume={119},
  pages={105730},
  year={2023},
  publisher={Elsevier}
}